Welcome to Skyzhou's Notes

ようこそいらっしゃいました!

目前已经有的笔记:

有些笔记可能 $\LaTeX$ 一下子加载不出来,需要手动刷新一下

非常抱歉!

CSC3001 Discrete Mathematics

L0 Introduction

Main Course Topics

- Propositional Logic

- Sets, First-Order Logic

- Methods of Proofs

- Mathematical Induction

- Recursion

- Greatest Common Divisors

- Modular Arthmetic

- Chinese Remainder Theorem

- Graphs

- Graph Matching

- Graph Coloring

- Combinatorial Proofs and its Principles

- Counting by Mapping

- Cardinality

L1 Propositional Logic

Statement (Proposition)

A Statement is a sentence that is either True or False.

e.g. $2+2=4 \to \text{True}$

Non e.g. $x+y>0$, cause they are true for some values of $x$ and $y$, but false for others.

Logic Operators

NOT $\lnot$

Alternative notation: $\lnot P = \overline{P}$

| $P$ | $\lnot P$ |

|---|---|

| T | F |

| F | T |

AND $\land$

| $P$ | $Q$ | $P\land Q$ |

|---|---|---|

| T | T | T |

| T | F | F |

| F | T | F |

| F | F | F |

OR $\lor$

| $P$ | $Q$ | $P\lor Q$ |

|---|---|---|

| T | T | T |

| T | F | T |

| F | T | T |

| F | F | F |

XOR $\oplus$

Exclusive OR

| $P$ | $Q$ | $P\oplus Q$ |

|---|---|---|

| T | T | F |

| T | F | T |

| F | T | T |

| F | F | F |

De Morgan's Laws

Logical Equivalence: Two statements have the same truth table.

De Morgan's Laws:

$$ \lnot (P \land Q) \equiv \lnot P \lor \lnot Q $$ $$ \lnot (P \lor Q) \equiv \lnot P \land \lnot Q $$

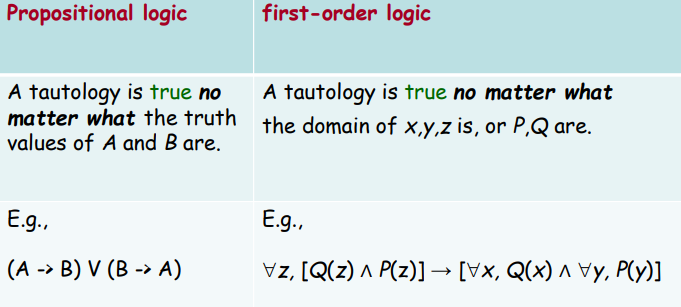

Tautology and Contradiction

A tautology is a statement that is always true.

- e.g. $P \lor \lnot P$

A contradiction is a statement that is always false. (Negation of a tautology)

- e.g. $P \land \lnot P$

Conditinal Statement

If $\to$

If P then Q: $P \to Q \equiv \lnot P \lor Q$

| $P$ | $Q$ | $P \to Q$ |

|---|---|---|

| T | T | T |

| T | F | F |

| F | T | T |

| F | F | T |

Convention: If we don't say anything wrong, then it is not false, and thus true.

Negation of If-Then: $\lnot(P \to Q) \equiv P \land \lnot Q$

Contrapositive: The contrapositive of $P \to Q$ is $\lnot Q \to \lnot P$

$$ P \to Q \equiv \lnot P \lor Q \equiv Q \lor \lnot P \equiv \lnot Q \to \lnot P $$

If and Only If $\iff$

P if Q means "if Q then P", or "Q implies P". We also say Q is a sufficient condition for P.

P only if Q means "if P then Q", or "P implies Q". We also say Q is a necessary condition for P.

P if and only if (iff) Q means P and Q are logically equivalent.

| $P$ | $Q$ | $P \iff Q$ |

|---|---|---|

| T | T | T |

| T | F | F |

| F | T | F |

| F | F | T |

$$ P \iff Q \equiv (P \to Q) \land (Q \to P) \equiv (P \to Q) \land (\lnot P \to \lnot Q) $$

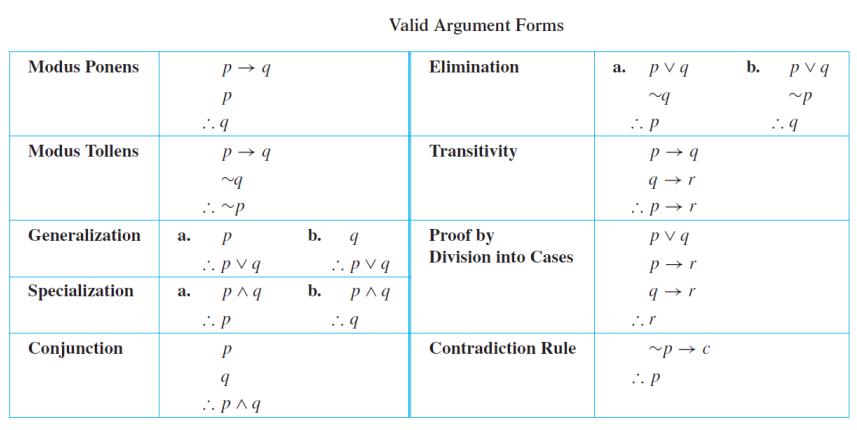

Argument

An argument is a sequence of statements.

All statements but the final one are called assumptions or hypothesis.

The final statement is called the conclusion.

An argument is valid if: whenever all the assumptions are true, then the conclusion is true. Or we say the conclusion follows from the assumptions.

L2 Sets

Basic Definitions

Defining Sets

Set: A set is an unordered collection of distinct objects.

Elements / Members: The objects in a set are called the elements or members of the set S, and we say S contains its elements.

e.g. $S=\{2,3,5,7\} = \{3,7,2,5\}$ (Unordered)

Alternatively, we use the notation $\{x \in A | P(x)\}$ to define the set as the set of elemets, $x$, in $A$ such that $x$ satisfies property $P$.

Classical Sets

- $\mathbb{Z}$: The set of all integers

- $\mathbb{Z^+}$: The set of all positive integers

- $\mathbb{Z^-}$: The set of all negative integers

- $\mathbb{N}$: The set of all nonnegative integers

- $\mathbb{R}$: The set of all real numbers

- $\mathbb{Q}$: The set of all rational numbers

- $\mathbb{C}$: The set of all complex numberse

Membership

The most basic question in set theory is whether an element is in a set.

- $A \subseteq B$: $A$ is an subset of $B$

- $A = B$: $A$ is equal to $B$

- $A \subset B$: $A$ is an proper subset of $B$

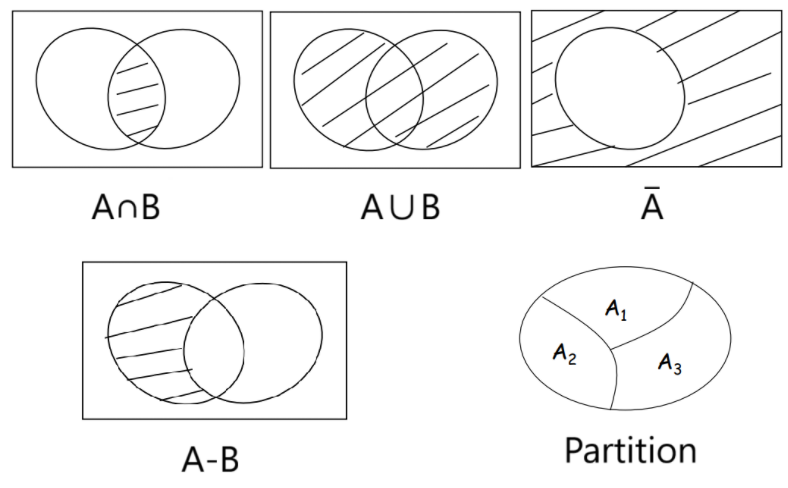

Operations on Sets

Basic Operations on Sets

Intersection: $A \cap B = \{x \in U | x \in A \text{ and } x \in B\}$

Two sets are said to be disjoint if their intersection is an empty set.

Union: $A \cup B = \{x \in U | x \in A \text{ or } x \in B\}$

And we have $|A \cup B| = |A|+|B| - |A \cap B|$

Complement: $\overline{A} = A^c = \{x \in U | x \notin A\}$

And if $A \subseteq B$, then $\overline{B} \subseteq \overline{A}$

Difference: $A - B = \{ x \in U | x \in A \text{ and }x \notin B \}$

And we have $|A-B| = |A| - |A \cap B|$

Big set operators: $$ \begin{align*} \bigcup_{i=0}^n A_i &= \{ x \in A_i \text{ for at least one } i = 0, 1, 2, \cdots, n \} \\ \bigcap_{i=0}^n A_i &= \{ x \in A_i \text{ for all } i = 0, 1, 2, \cdots, n \} \end{align*} $$

Partition of Sets

Partition: A collection of nonempty sets $\{ A_1, A_2, \cdots, A_n \}$ is a partition of a set A if and only if $$ \begin{align*} & A = A_1 \cup A_2 \cup \cdots \cup A_n \\ & A_1, A_2, \cdots, A_n \text{ are mutually disjoint (or pairwise disjoint)} \end{align*} $$

Cartesian Products

Cartesian Products: Given two sets $A$ and $B$, the Cartesian product $A \times B$ is the set of all ordered pairs $(a,b)$, where $a$ is in $A$ and b is in $B$. That is: $$ A \times B = \{(a,b) | a \in A, b \in B\} $$

e.g. Let $A = \{ a, b, c, \cdots, z \}$. Let $B = \{ 0, 1, 2, \cdots, 9 \}$. Then $A \times B = \{ (a,1), (a,2), \cdots, (a, 9), (b, 1), (b, 2), \cdots, (z, 9) \}$

Well...It may seems a bit like the outer product of two vectors is a matrix, but in a set.

Set Identities

Set Laws

Let $A$, $B$ and $C$ be subsets of a universal set $U$, and we have:

- Commutative Law

- $A \cup B = B \cup A$

- $A \cap B = B \cap A$

- Associative Law

- $(A \cup B) \cup C = A \cup (B \cup C)$

- $(A \cap B) \cap C = A \cap (B \cap C)$

- Distributive Law

- $A \cup (B \cap C) = (A \cup B) \cap (A \cup C)$

- $A \cap (B \cup C) = (A \cap B) \cup (A \cap C)$

- Identity Law

- $A \cup \emptyset = A$

- $A \cap U = A$

- Complement Law

- $A \cup A^c = U$

- $A \cap A^c = \emptyset$

- De Morgan's Law

- $(A \cup B)^c = A^c \cap B^c$

- $(A \cap B)^c = A^c \cup B^c$

- Set Difference Law

- $A - B = A \cap B^c$

L3 First-order Logic

Quantifiers

Predicates

Predicate: Propositions (i.e. statments) with variables.

- e.g. $P(x, y): x + 2 = y$

The domain of a variable is the set of all values that may be substituted in place of the variable.

The truth of a quantified statement depends on the domain.

The Universal Quantifier $\forall$

$\forall x$: For ALL $x$

- e.g. $\forall x, x^2 \geq x$

The Existential Quantifier $\exists$

$\exists x$: There EXISTS some $x$ (perhaps one)

- e.g. $\exists x \in \mathbb{Z}^+, x=x^2$

e.g. How to write prime(p) ?

A prime number is a natural number greater than $1$ that has no positive divisors other than $1$ and itself.

- Conditions: $p \not= ab$

- Variables: $p, a, b$

- Domains: $p, a, b > 1, p, a, b \in \mathbb{Z}$

- Add quantifiers and reconcile the order:

$$ (p > 1) \land (p \in \mathbb{Z}) \land (\forall a, b, \in \mathbb{Z}^+, (p \not= ab) \lor (a=1) \lor (a=p)) $$

Negation

One simple equivalence: $$ \lnot \forall x, P(x) \equiv \exists x, \lnot P(x) $$ e.g. Not every like you = There exists someone who doesn't like you.

Multiple Quantifiers

The order of quantifiers is very important.

Arguments of Quantified Statements

Predicate Calculus Validity

A quantified argument is valid if the conclusion is true whenever the assumptions are true.

Arguments with Quantified Statements

Universal Instantiation: $$ \begin{align*} & \forall x , P(x) \\ \therefore \ & P(a) \end{align*} $$

Universal Modus Ponens $$ \begin{align*} & \forall x , (P(x) \to Q(x)) \\ & P(a) \\ \therefore \ & Q(a) \end{align*} $$

Universal Modus Tollens: $$ \begin{align*} & \forall x, P(x) \to Q(x) \\ & \lnot Q(A) \\ \therefore \ & \lnot P(A) \end{align*} $$

Universal Generalization: $$ \frac{A \to R(c)}{A \to \forall x , R(x)} \qquad \\ \text{(valid rule, provided $c$ is independent of $A$)} $$

CSC3200 Data Structures and Advanced Programming

Introduction

Teaching C++ first.

Then data sturctures.

Assignment 1

Trigonometric Functions

Implement some of the trigonometric functions from scratch without using any mathematical functions from STL.

student_math.h

#ifndef STUDENT_MATH_H

#define STUDENT_MATH_H

namespace student_std {

double sin(double x); //x is an angle in radian

double sin_deg(double x); //x is an angle in degree

double cos(double x); //x is an angle in radian

double cos_deg(double x); //x is an angle in degree

double tan(double x); //x is an angle in radian

double tan_deg(double x); //x is an angle in degree

double cot(double x); //x is an angle in radian

double cot_deg(double x); //x is an angle in degree

}

#endif

student_math.cpp

#include "student_math.h"

const double pi = 3.1415926535897932384626;

const double esp = 1e-8;

double toRadian(double x) {

return pi*x/180.0;

}

double dcmp(double x) {

return (x < -esp ? -1 : (x > esp ? 1 : 0));

}

double fabs(double x) {

return (dcmp(x) == -1 ? -x : x);

}

double TrigonometricLagrange(double x, bool issin) {

while(x > 2.0*pi) x -= 2.0*pi;

while(x < -2.0*pi) x += 2.0*pi;

double numerator = 1, denominator = 1;

double timescnt = 1;

if(issin) {

numerator = x;

timescnt = 2;

}

double current = numerator/denominator, last = numerator/denominator;

double re = current;

while(fabs(current) > esp) {

current = current*x*x/(timescnt)/(timescnt+1.0)*(-1.0);

timescnt += 2;

re += current;

}

return (fabs(re) > esp ? re : 0);

}

namespace student_std {

double sin(double x) {

return TrigonometricLagrange(x, 1);

}

double sin_deg(double x) {

return sin(toRadian(x));

}

double cos(double x) {

return TrigonometricLagrange(x, 0);

}

double cos_deg(double x) {

return cos(toRadian(x));

}

double tan(double x) {

return sin(x)/cos(x);

}

double tan_deg(double x) {

return sin(toRadian(x))/cos(toRadian(x));

}

double cot(double x) {

return 1.0/tan(x);

}

double cot_deg(double x) {

return 1.0/tan(toRadian(x));

}

}

String

Implement a C++ string class from scratch without using STL.

student_string.h

#ifndef STUDENT_STRING_H

#define STUDENT_STRING_H

#define MAXLEN 256

namespace student_std {

class string {

public:

string();

string(const char* str);

string(string const&);

~string();

int get_strlen() const;

const char* get_c_str() const;

void strcat(string const&);

string& operator=(string const&);

string& operator+=(string const&);

char& operator[](int);

const char& operator[](int) const;

void to_upper();

void to_lower();

void strcopy(string const&);

bool equals(string const&) const;

bool equals_ignore_case(string const&) const;

void trim(); // Removes spaces ' ' from beginning and end

bool is_alphabetic() const;

private: // hint

char c_str[MAXLEN];

int strlen;

};

}

#endif

student_string.cpp

#include "student_string.h"

namespace student_std {

string::string() {

this->c_str[0] = '\0';

this->strlen = 0;

}

string::string(const char* str) {

int len = 0;

while(str[len] != '\0') {

len++;

}

this->strlen = len;

for(int i = 0; i <= len; i++) {

this->c_str[i] = str[i];

}

}

string::string(string const& str) {

this->strlen = str.strlen;

for(int i = 0; i <= this->strlen; i++) {

this->c_str[i] = str.c_str[i];

}

}

string::~string() {}

int string::get_strlen() const {

return this->strlen;

}

const char* string::get_c_str() const {

return this->c_str;

}

void string::strcat(string const& str) {

int len = this->strlen;

for(int i = 0; i <= str.strlen; i++) {

this->c_str[i+len] = str.c_str[i];

}

this->strlen = len+str.strlen;

}

string& string::operator=(string const& str) {

if(this == &str) {

return *this;

}

this->strlen = str.strlen;

for(int i = 0; i <= this->strlen; i++) {

this->c_str[i] = str.c_str[i];

}

return *this;

}

string& string::operator+=(string const& str) {

this->strcat(str);

return *this;

}

char& string::operator[](int i) {

return this->c_str[i];

}

const char& string::operator[](int i) const {

return this->c_str[i];

}

void string::to_upper() {

for(int i = 0; i < this->strlen; i++) {

if(this->c_str[i] >= 'a' && this->c_str[i] <= 'z') {

this->c_str[i] = char(c_str[i]+('A'-'a'));

}

}

}

void string::to_lower() {

for(int i = 0; i < this->strlen; i++) {

if(this->c_str[i] >= 'A' && this->c_str[i] <= 'Z') {

this->c_str[i] = char(c_str[i]+('a'-'A'));

}

}

}

void string::strcopy(string const& str) {

*this = str;

}

bool string::equals(string const& str) const {

if(this->strlen != str.strlen) return false;

for(int i = 0; i < this->strlen; i++) {

if(this->c_str[i] != str.c_str[i]) return false;

}

return true;

}

bool string::equals_ignore_case(string const& str) const {

string a = *this, b = str;

a.to_lower();

b.to_lower();

return a.equals(b);

}

void string::trim() {

int l = 0, r = this->strlen-1;

while(this->c_str[l] == ' ') {

l++;

}

while(this->c_str[r] == ' ') {

r--;

}

for(int i = 0; i < r-l+1; i++) {

this->c_str[i] = this->c_str[i+l];

}

for(int i = r-l+1; i <= this->strlen; i++) {

this->c_str[i] = '\0';

}

this->strlen = r-l+1;

}

bool string::is_alphabetic() const {

for(int i = 0; i < this->strlen; i++) {

if(!((this->c_str[i] >= 'a' && this->c_str[i] <= 'z') || (this->c_str[i] >= 'A' && this->c_str[i] <= 'Z'))) {

return false;

}

}

return true;

}

}

Assignment 2

Vector

Implement a C++ vector (dynamic array) from scratch without using STL.

student_vector.h

#ifndef STUDENT_VECTOR_H

#define STUDENT_VECTOR_H

#include <cstddef>

#include <cassert>

#include <stdexcept>

#include <iterator>

namespace student_std {

template <typename T>

class vector {

public:

using size_type = std::size_t;

using difference_type = std::ptrdiff_t;

using value_type = T;

private:

T* s;

size_type sz;

size_type cap;

void reallocate(size_type newcap) {

if(newcap < sz) newcap = sz;

T* news = new T[newcap];

for(size_type i = 0; i < sz; i++) {

news[i] = s[i];

}

delete[] s;

s = news;

cap = newcap;

}

public:

vector() {

s = nullptr;

sz = 0;

cap = 0;

}

vector(const vector& other) {

s = nullptr;

sz = other.sz;

cap = other.cap;

s = new T[cap];

for(size_type i = 0; i < sz; i++) {

s[i] = other.s[i];

}

}

vector& operator= (const vector& other) {

if(this == &other) return *this;

delete[] s;

sz = other.sz;

cap = other.cap;

s = new T[cap];

for(size_type i = 0; i < sz; i++) {

s[i] = other.s[i];

}

return *this;

}

~vector() {

delete[] s;

}

size_type size() const {

return sz;

}

size_type capacity() const {

return cap;

}

bool empty() const {

return sz == 0;

}

const T* data() const {

return s;

}

const T& at(size_type p) const {

if(p >= sz) throw std::out_of_range("vector::at out of range");

else return s[p];

}

const T& operator[] (size_type p) const {

assert(p >= 0 && p < sz);

return s[p];

}

const T& front() const {

assert(sz > 0);

return s[0];

}

const T& back() const {

assert(sz > 0);

return s[sz-1];

}

void reserve(size_type newcap) {

if(newcap > cap) {

reallocate(newcap);

}

}

void push_back(const T& val) {

if(sz == cap) {

size_type newcap = (cap == 0 ? 1 : cap * 2);

reserve(newcap);

}

s[sz] = val;

sz++;

}

void pop_back() {

assert(sz > 0);

sz--;

}

void resize(size_type newsz, const T& val) {

if(newsz > cap) {

reserve(newsz);

}

if(newsz > sz) {

for(size_type i = sz; i < newsz; i++) {

s[i] = val;

}

}

sz = newsz;

}

void resize(size_type newsz) {

resize(newsz, T());

}

void clear() {

sz = 0;

}

void swap(vector& other) {

std::swap(s, other.s);

std::swap(sz, other.sz);

std::swap(cap, other.cap);

}

T* data() {

return s;

}

T& at(size_type p) {

if(p >= sz) throw std::out_of_range("vector::at out of range");

else return s[p];

}

T& operator[] (size_type p) {

assert(p >= 0 && p < sz);

return s[p];

}

T& front() {

assert(sz > 0);

return s[0];

}

T& back() {

assert(sz > 0);

return s[sz-1];

}

public:

class iterator {

public:

using difference_type = std::ptrdiff_t;

using value_type = T;

using pointer = T*;

using reference = T&;

using iterator_category = std::random_access_iterator_tag;

private:

pointer ptr;

public:

iterator() {

ptr = nullptr;

}

iterator(pointer p) {

ptr = p;

}

reference operator*() const {

return *ptr;

}

pointer operator->() const {

return ptr;

}

iterator& operator++() {

ptr++;

return *this;

}

iterator operator++(int) {

iterator tmp = *this;

ptr++;

return tmp;

}

iterator& operator--() {

ptr--;

return *this;

}

iterator operator--(int) {

iterator tmp = *this;

ptr--;

return tmp;

}

iterator& operator+=(difference_type num) {

ptr += num;

return *this;

}

iterator& operator-=(difference_type num) {

ptr -= num;

return *this;

}

iterator operator+(difference_type num) const {

return iterator(ptr+num);

}

iterator operator-(difference_type num) const {

return iterator(ptr-num);

}

difference_type operator-(const iterator& other) const {

return ptr-other.ptr;

}

bool operator==(const iterator& other) const{

return ptr == other.ptr;

}

bool operator!=(const iterator& other) const{

return ptr != other.ptr;

}

bool operator<=(const iterator& other) const{

return ptr <= other.ptr;

}

bool operator>=(const iterator& other) const{

return ptr >= other.ptr;

}

bool operator<(const iterator& other) const{

return ptr < other.ptr;

}

bool operator>(const iterator& other) const{

return ptr > other.ptr;

}

};

public:

iterator begin() {

return iterator(s);

}

iterator end() {

return iterator(s+sz);

}

iterator erase(iterator it) {

assert(it >= begin() && it < end());

T* p = &(*it);

for(T* i = p; i+1 < s+sz; i++) {

*i = *(i+1);

}

sz--;

return iterator(p);

}

iterator erase(iterator it1, iterator it2) {

assert(it1 >= begin() && it1 < it2 && it2 <= end());

T* p1 = &(*it1);

T* p2 = &(*it2);

size_type erasecnt = p2 - p1;

for(T* i = p1; i+erasecnt < s + sz; i++) {

*i = *(i+erasecnt);

}

sz -= erasecnt;

return iterator(p1);

}

iterator insert(iterator it, const T& val) {

assert(it >= begin() && it <= end());

size_type p = it - begin();

if(sz == cap) reserve(cap == 0 ? 1 : cap * 2);

it = iterator(s + p);

for(size_type i = sz; i > p; i--) {

s[i] = s[i-1];

}

s[p] = val;

sz++;

return it;

}

};

}

#endif

Assignment 3

Maze

Too simple.

Priority Queue

Priority queue with list and $O(n)$ operation ??? What the fk are you doing CUHKSZ

student_priority_queue.h

#ifndef STUDENT_PRIORITY_QUEUE_H

#define STUDENT_PRIORITY_QUEUE_H

#include <list>

namespace student_std {

template <typename T, typename Container = std::list<T>>

class priority_queue {

public:

using container_type = Container;

using value_type = typename Container::value_type;

using size_type = typename Container::size_type;

// ...

private:

Container c;

static bool cmp(T a, T b) {

return b < a;

}

public:

priority_queue() = default;

priority_queue(const priority_queue &other) {

c = other.c;

}

priority_queue &operator = (const priority_queue &other) {

if(this != &other) c = other.c;

return this;

}

value_type const& top() const {

return *c.begin();

}

void pop() {

c.erase(c.begin());

}

size_type size() const {

return c.size();

}

bool empty() const {

return c.empty();

}

void push(const value_type &val) {

auto it = c.begin();

while(it != c.end() && val < *it) it++;

c.insert(it, val);

}

void swap(priority_queue &other) {

c.swap(other.c);

}

};

}

#endif

Assignment 4

Unordered Map

Implement an unordered map using bucket hashing.

Bucket type: vector and list

student_unordered_map.h

#ifndef STUDENT_UNORDERED_MAP_H

#define STUDENT_UNORDERED_MAP_H

#include <vector>

#include <list>

#include <functional>

#include <utility>

#include <cassert>

namespace student_std {

template <typename Key, typename T, typename Hash = std::hash<Key>>

class unordered_map {

public:

using key_type = Key;

using mapped_type = T;

using size_type = std::size_t;

using difference_type = std::ptrdiff_t;

using value_type = std::pair<Key, T>;

using hasher = Hash;

using reference = value_type&;

using const_reference = const value_type&;

private:

std::vector <std::list <value_type>> buckets;

size_type sz = 0;

hasher hsh;

size_type index_for(Key const& k) const {

return hsh(k) % buckets.size();

}

double load_factor() const {

return double(sz) / double(buckets.size());

}

void rehash(size_type newsz) {

auto tmp = std::move(buckets);

buckets = std::vector <std::list <value_type>> (newsz);

for(auto& lst : tmp) {

for(auto& x : lst) {

size_type idx = index_for(x.first);

buckets[idx].push_back(std::move(x));

}

}

}

public:

unordered_map(size_type init_sz = 8) : buckets(init_sz) {}

size_type size() const {

return sz;

}

bool empty() const {

return sz == 0;

}

size_type bucket_count() const {

return buckets.size();

}

bool contains(key_type const& k) const {

size_type idx = index_for(k);

for(auto const& x : buckets[idx]) {

if(x.first == k) {

return true;

}

}

return false;

}

void clear() {

for(auto& x : buckets) {

x.clear();

}

sz = 0;

}

size_type erase(key_type const& k) {

size_type idx = index_for(k);

auto& lst = buckets[idx];

for(auto it = lst.begin(); it != lst.end(); it++) {

if(it->first == k) {

lst.erase(it);

sz--;

return 1;

}

}

return 0;

}

T& operator [](key_type const& k) {

size_type idx = index_for(k);

auto& lst = buckets[idx];

for(auto& x : lst) {

if(x.first == k) {

return x.second;

}

}

lst.emplace_back(k, T());

sz++;

if(load_factor() >= 2.0) {

rehash(buckets.size()*2);

}

idx = index_for(k);

auto& nlst = buckets[idx];

for(auto& x : nlst) {

if(x.first == k) {

return x.second;

}

}

assert(false);

static T re{};

return re;

}

};

}

#endif

BST's Inorder Iterator

Implement the binary tree traversal inorder forward iterator from scratch.

student_inorder_iterator.h

#ifndef STUDENT_INORDER_ITERATOR_H

#define STUDENT_INORDER_ITERATOR_H

namespace student_std {

template <typename BinaryTree>

class inorder_iterator {

public:

using value_type = typename BinaryTree::value_type;

using difference_type = std::ptrdiff_t;

using iterator_category = std::forward_iterator_tag;

using reference = value_type const&;

using pointer = value_type const*;

private:

BinaryTree const* cur;

public:

inorder_iterator() : cur(nullptr) {}

inorder_iterator(BinaryTree const* node) {

cur = node;

if(cur) {

while(cur->left()) cur = cur->left();

}

}

reference operator*() const {

return cur->value();

}

pointer operator->() const {

return &(cur->value());

}

inorder_iterator& operator++() {

if(!cur) return *this;

if(cur->right()) {

cur = cur->right();

while(cur->left()) cur = cur->left();

return *this;

}

auto p = cur->parent();

while(p && p->right() == cur) {

cur = p;

p = p->parent();

}

cur = p;

return *this;

}

inorder_iterator operator++(int) {

inorder_iterator tmp = *this;

++(*this);

return tmp;

}

bool operator==(inorder_iterator const& other) const {

return cur == other.cur;

}

bool operator!=(inorder_iterator const& other) const {

return !(*this == other);

}

};

}

#endif

Assignment 5

AVL Tree

Implement an AVL Tree from scratch.

student_avl_tree.h

#ifndef STUDENT_AVL_TREE_H

#define STUDENT_AVL_TREE_H

#include <algorithm>

#include <functional>

#include <utility>

#include <cstddef>

#include <iterator>

namespace student_std {

template <typename Key, typename Comp = std::less<Key>>

class avl_tree {

class avl_node {

public:

using size_type = std::size_t;

using difference_type = std::ptrdiff_t;

avl_node(const Key& k, avl_node* p = nullptr) :

m_key(k), m_parent(p), m_left(nullptr), m_right(nullptr), m_size(1), m_height(0) {}

Key const& value() const { return m_key; };

avl_node const* parent() const { return m_parent; };

avl_node const* left() const { return m_left; };

avl_node const* right() const{ return m_right; };

size_type size() const { return m_size; }

std::ptrdiff_t height() const { return m_height; }

private:

size_type m_size;

std::ptrdiff_t m_height;

Key m_key;

avl_node* m_parent;

avl_node* m_left;

avl_node* m_right;

friend class avl_tree;

};

class iterator {

public:

using value_type = avl_node;

using reference = value_type const&;

using pointer = value_type const*;

using difference_type = std::ptrdiff_t;

using iterator_category = std::bidirectional_iterator_tag;

iterator(avl_node* node = nullptr) : m_node{node} {}

iterator(pointer node) : m_node{const_cast<avl_node*>(node)} {}

iterator& operator++() { // O(log n)

if (m_node == nullptr) return *this;

if (m_node->m_right) {

m_node = m_node->m_right;

while (m_node->m_left) {

m_node = m_node->m_left;

}

} else {

avl_node* p = m_node->m_parent;

while (p && m_node == p->m_right) {

m_node = p;

p = p->m_parent;

}

m_node = p;

}

return *this;

}

iterator operator++(int) { // O(log n)

iterator tmp = *this;

++(*this);

return tmp;

}

iterator& operator--() { // O(log n)

if (m_node == nullptr) return *this;

if (m_node->m_left) {

m_node = m_node->m_left;

while (m_node->m_right) {

m_node = m_node->m_right;

}

} else {

avl_node* p = m_node->m_parent;

while (p && m_node == p->m_left) {

m_node = p;

p = p->m_parent;

}

m_node = p;

}

return *this;

}

iterator operator--(int) { // O(log n)

iterator tmp = *this;

--(*this);

return tmp;

}

reference operator*() const { // O(1)

return *m_node;

}

pointer operator->() const { // O(1)

return m_node;

}

bool operator==(iterator const& other) const {

return m_node == other.m_node;

}

bool operator!=(iterator const& other) const {

return m_node != other.m_node;

}

private:

avl_node* m_node;

friend class avl_tree;

};

public:

using key_type = Key;

using node_type = avl_node;

using size_type = std::size_t;

using comparison = Comp;

using const_iterator = iterator;

avl_tree() : m_root(nullptr), m_comp(comparison()) {}

~avl_tree() { clear(m_root); }

iterator insert(Key const& key) { // O(log n)

if (!m_root) {

m_root = new avl_node(key);

return iterator(m_root);

}

avl_node* current = m_root;

avl_node* parent = nullptr;

while (current) {

parent = current;

if (m_comp(key, current->m_key)) {

current = current->m_left;

} else if (m_comp(current->m_key, key)) {

current = current->m_right;

} else {

return iterator(current);

}

}

avl_node* new_node = new avl_node(key, parent);

if (m_comp(key, parent->m_key)) {

parent->m_left = new_node;

} else {

parent->m_right = new_node;

}

rebalance_path(new_node);

return iterator(new_node);

}

iterator erase(Key const& key) { // O(log n)

avl_node* node = find_node(key);

if (!node) return end();

avl_node* node_to_delete = node;

avl_node* successor_to_return = nullptr;

if (node->m_right) {

successor_to_return = node->m_right;

while (successor_to_return->m_left) {

successor_to_return = successor_to_return->m_left;

}

} else {

avl_node* p = node->m_parent;

while (p && node == p->m_right) {

node = p;

p = p->m_parent;

}

successor_to_return = p;

}

if (node_to_delete->m_left && node_to_delete->m_right) {

avl_node* successor_swap = node_to_delete->m_right;

while (successor_swap->m_left) {

successor_swap = successor_swap->m_left;

}

node_to_delete->m_key = successor_swap->m_key;

node_to_delete = successor_swap;

}

avl_node* child = (node_to_delete->m_left) ? node_to_delete->m_left : node_to_delete->m_right;

avl_node* parent_of_deleted = node_to_delete->m_parent;

avl_node* rebalance_start_node = parent_of_deleted;

if (child) {

child->m_parent = parent_of_deleted;

}

if (!parent_of_deleted) {

m_root = child;

} else {

if (parent_of_deleted->m_left == node_to_delete) {

parent_of_deleted->m_left = child;

} else {

parent_of_deleted->m_right = child;

}

}

delete node_to_delete;

if (rebalance_start_node) {

rebalance_path(rebalance_start_node);

}

return iterator(successor_to_return);

}

iterator find(Key const& key) const { // O(log n)

avl_node* res = find_node(key);

return iterator(res);

}

bool contains(Key const& key) const { // O(log n)

return find_node(key) != nullptr;

}

size_type size() const { // O(1)

return m_root ? m_root->m_size : 0;

}

std::ptrdiff_t height() const { // O(1)

return m_root ? m_root->m_height : -1;

}

std::ptrdiff_t getBL() const {

return get_balance_factor(m_root);

}

iterator begin() const { // O(log n)

if (!m_root) return iterator(static_cast<avl_node*>(nullptr));

avl_node* curr = m_root;

while (curr->m_left) {

curr = curr->m_left;

}

return iterator(curr);

}

iterator end() const {

return iterator(static_cast<avl_node*>(nullptr));

}

iterator root() const { // O(1)

return iterator(m_root);

}

private:

avl_node* m_root;

comparison m_comp;

avl_node* find_node(Key const& key) const { // O(log n)

avl_node* curr = m_root;

while (curr) {

if (m_comp(key, curr->m_key)) {

curr = curr->m_left;

} else if (m_comp(curr->m_key, key)) {

curr = curr->m_right;

} else {

return curr;

}

}

return nullptr;

}

void clear(avl_node* node) {

if (node) {

clear(node->m_left);

clear(node->m_right);

delete node;

}

}

std::ptrdiff_t get_height(avl_node* n) const {

return n ? n->m_height : -1;

}

size_type get_size(avl_node* n) const {

return n ? n->m_size : 0;

}

std::ptrdiff_t get_balance_factor(avl_node* n) const {

if (!n) return 0;

return get_height(n->m_left) - get_height(n->m_right);

}

void update_stats(avl_node* n) {

if (n) {

n->m_height = 1 + std::max(get_height(n->m_left), get_height(n->m_right));

n->m_size = 1 + get_size(n->m_left) + get_size(n->m_right);

}

}

void rotate_right(avl_node* y) {

avl_node* x = y->m_left;

avl_node* T2 = x->m_right;

x->m_right = y;

y->m_left = T2;

x->m_parent = y->m_parent;

y->m_parent = x;

if (T2) T2->m_parent = y;

if (x->m_parent) {

if (x->m_parent->m_left == y) x->m_parent->m_left = x;

else x->m_parent->m_right = x;

} else {

m_root = x;

}

update_stats(y);

update_stats(x);

}

void rotate_left(avl_node* x) {

avl_node* y = x->m_right;

avl_node* T2 = y->m_left;

y->m_left = x;

x->m_right = T2;

y->m_parent = x->m_parent;

x->m_parent = y;

if (T2) T2->m_parent = x;

if (y->m_parent) {

if (y->m_parent->m_left == x) y->m_parent->m_left = y;

else y->m_parent->m_right = y;

} else {

m_root = y;

}

update_stats(x);

update_stats(y);

}

void rebalance_path(avl_node* node) {

while (node) {

update_stats(node);

std::ptrdiff_t balance = get_balance_factor(node);

// Left Heavy

if (balance > 1) {

if (get_balance_factor(node->m_left) < 0) {

rotate_left(node->m_left); // LR

}

rotate_right(node); // LL or LR

}

// Right Heavy

else if (balance < -1) {

if (get_balance_factor(node->m_right) > 0) {

rotate_right(node->m_right); // RL

}

rotate_left(node); // RR or RL

}

node = node->m_parent;

}

}

};

}

#endif

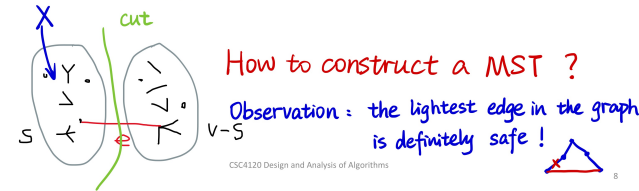

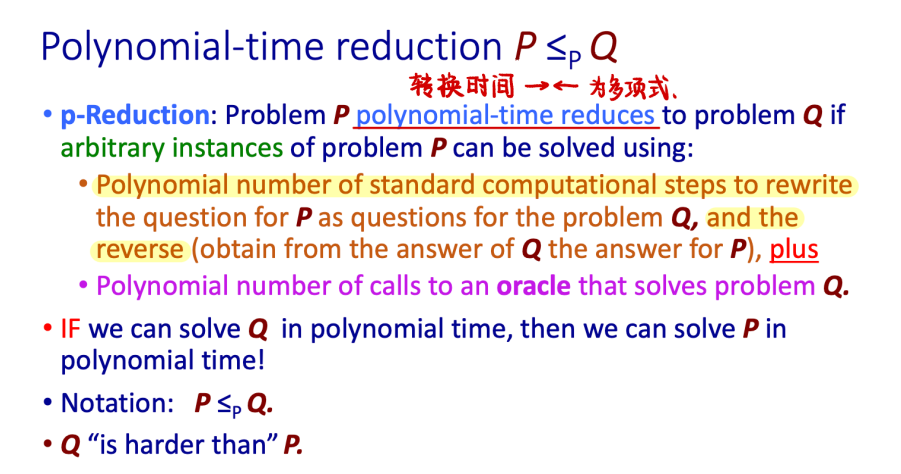

CSC4120 Design and Analysis of Algorithms

L0 Introduction

Main Course Topics

- Key algorithm concepts (asymptotic complexity, divide-and-conquer)

- Basic data structure algorithms

- Heaps (priority queues)

- Binary seach trees (scheduling)

- Hashing (cryptography, dictionaries)

- Graph searches (reachability analysis)

- Shortest paths (Google maps, navigation)

- Greedy algorithms (minimum spanning trees, Huffman codes)

- Dynamic programming (any optimization problem)

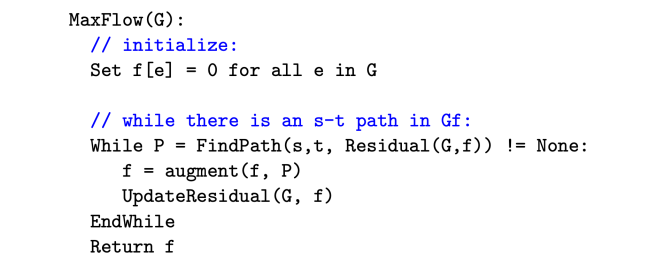

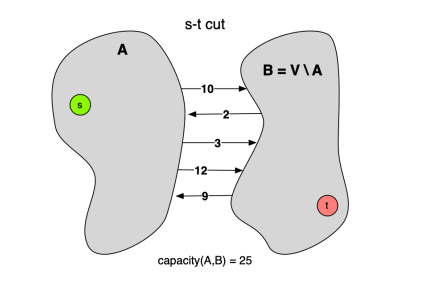

- Network flows

- Compleity (NP-complete, poly reductions, approximation algorithms)

Textbook: Algorithms by S. Dasgupta, C.H. Papadimitriou, and U.V. Vazirani.

L1 Basic Concepts of Algorithmic Performance

Asymptotic Complexity

What is $T(n)$?

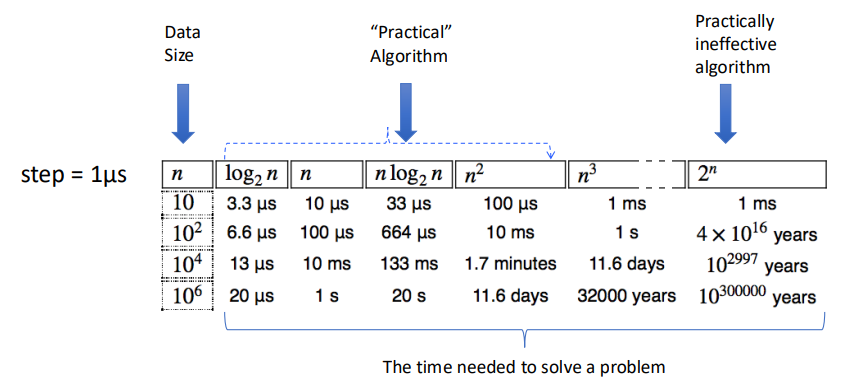

$T(n)$ is the largest (worst) execution time over all inputs of size $n$.

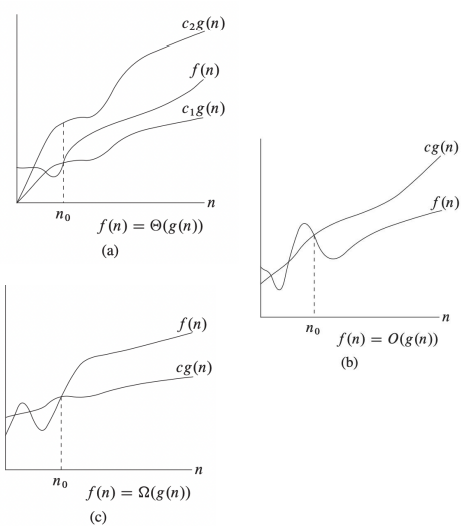

Asymptotic Complexity

Goal: Capture the growth of $T(n)$ of an algorithm as the problem instance size $n \rightarrow \infty$

Key Idea: Keep only the rate of growth, i.e., the dominant term when $n \rightarrow \infty$, forgetting multiplicative constants and lower order terms.

Three Os

-

$\Theta$: Grows asymptotically as fast as ($=$)

- Tight Bound

- $f(n) = \Theta(g(n)) \Leftrightarrow \exists c_1, c_2 > 0, n_0 \text{ s.t. } c_1 g(n) \leq f(n) \leq c_2 g(n) \text{ for } n \geq n_0$

- e.g. $100n^2 + 5n - 5 = 0.1n^2 + 10n^{1.5} - 5 = \Theta(n^2)$

-

$O$: Grows asymptotically at most as fast as ($\leq$)

- Upper Bound

- $f(n) = O(g(n)) \Leftrightarrow \exists c > 0, n_0 \text{ s.t. } f(n) \leq c g(n) \text{ for } n \geq n_0$

- e.g. $2n^{1.5}-5 \leq n^{1.5} \leq n^2 \Rightarrow 2n^{1.5}-5 = O(n^{1.5}), = O(n^2)$

- e.g. $n^{1.5} \leq n^2 \leq 2^n \Rightarrow n^{1.5} = O(n^2), n^2 = O(2^n), n^{1.5} = O(2^n)$

-

$\Omega$: Grows asymptotically at least as fast as ($\geq$)

- Lower Bound

- $f(n) = \Omega(g(n)) \Leftrightarrow \exists c > 0, n_0 \text{ s.t. } f(n) \geq c g(n) \text{ for } n \geq n_0$

- e.g. $5 \leq 2n \leq n^{1.5}+1 \leq 2^n \Rightarrow 2n = \Omega(1), 2^n = \Omega(n^{1.5})$

In Practice

Conclusions

- To measure the performance of algorithms we measure their asymptotic complexity.

- Big $O$ is used to describe an upper bound for the running time of an algorithm.

- Big $\Omega$ is used to describe a lower bound for the running time of an algorithm.

- Big $\Theta$ is used to denote asymptotic equivalence of the running times of two algorithms. And when $\Omega(g(n)) = O(g(n)) = f(n)$, we can have $\Theta(g(n)) = f(n)$

L2 Divide and Conquer

Divide and Conquer (Sorting and Master Theorem)

Basic Devide and Conquer

There are 3 steps:

- Divide input into multiple smaller (usually disjoint) points.

- Conquer each of the parts separatelly (using recursive calls).

- Combine results from different calls.

$$ T(n) = \text{divide time} \\ +T(n_1) + T(n_2) + \cdots + T(n_k) \\ +\text{combine time} $$

Sorting

A non-D&C solution: Insertion Sort

void insertionSort(int arr[], int n) {

for (int i = 1; i < n; i++) {

int key = arr[i];

int j = i - 1;

while (j >= 0 && arr[j] > key) {

arr[j + 1] = arr[j];

j--;

}

arr[j + 1] = key;

}

}

We have the complexity is $O(n^2)$ since it runs two loops with $n$ steps.

But $O(n^2)$ is too slow for most of the problem, can it be much faster? Of course. We can use divide and conquer here!

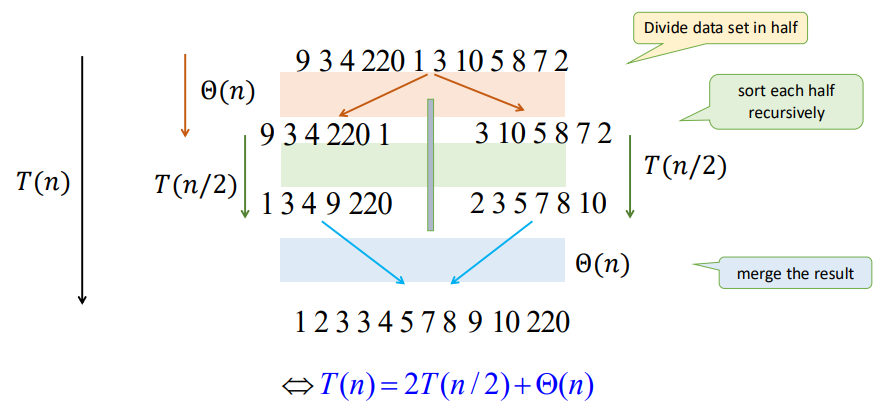

A D&C solution: Merge Sort

void merge(vector<int>& arr, int l, int m, int r) {

int n1 = m - l + 1;

int n2 = r - m;

vector<int> L(n1), R(n2);

for (int i = 0; i < n1; i++) L[i] = arr[l + i];

for (int j = 0; j < n2; j++) R[j] = arr[m + 1 + j];

int i = 0, j = 0, k = l;

while (i < n1 && j < n2) {

if (L[i] <= R[j]) arr[k++] = L[i++];

else arr[k++] = R[j++];

}

while (i < n1) arr[k++] = L[i++];

while (j < n2) arr[k++] = R[j++];

}

void mergeSort(vector<int>& arr, int l, int r) {

if (l < r) {

int m = l + (r - l) / 2;

mergeSort(arr, l, m);

mergeSort(arr, m + 1, r);

merge(arr, l, m, r);

}

}

From the function mergeSort we can see that we divide the array into $2$ parts and conquer them seperately and recursively. And the merge function has the time complexity of $\Theta(n)$.

From the graph we can see that there is only $\log n$ levels of recursion. Thus, we have

$$ \begin{align*} T(n) &= 2T(n/2) + cn \\ &= (cn) \log n + nT(1) \\ &= \Theta (n \log n) \end{align*} $$

Where $cn$ denotes for the time of divide and merge, and $T(1)$ represents for the node of the recursion tree.

Geometric Sums

Consider a geometric series $$ S = 1 + x + x^2 + \cdots + x^h = \frac{x^{x+1}-1}{x-1} \ \ (\text{for } x \not= 1) $$ If $x < 1$, then $$ 1 + x + x^2 + \cdots + x^h \in \left[ 1, \frac{1}{1-x} \right] \Rightarrow S = \Theta(1) $$ If $x > 1$ , then $$ 1 + x + x^2 + \cdots + x^h < \frac{1}{1-x} x^h \Rightarrow S = \Theta(x^h) $$

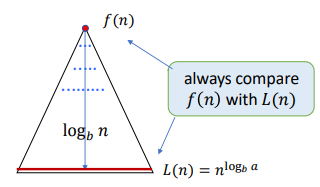

Master Theorem

Consider the recursion:

$$ T(n) = aT(n/b) + f(n) $$

- Number of levels: $\log_b n = \Theta(\log n)$

- Number of leaves: $L = a^{\log_b n} = n^{log_b a}$

We use the recursion tree to calculate the cost. The cost of the $i$-th (root $i=0$) is: $$ \begin{align*} C_i &= (\text{Nodes Number of } i \text{-th Level}) \times (f \text{ of Every Subproblem}) \\ &= a^i f(n/b^i) \end{align*} $$ So that, we have: $$ T(n) = \sum_{i=0}^h C_i = \sum_{i=0}^{\log_b n} a^i f(n/b^i) $$

We need to compare the time within root and leaves to get the main factor. The usual method is to compare $f(n)$ and $L(n) = n^{\log_b a}$

There are 3 situations here:

-

Geometrically Increasing (Leaves): $$ f(n) = O(L^{1-\epsilon}) \Rightarrow T(n) = \Theta(L) $$ e.g. $T(n) = 2T(n/2) + \sqrt{n}$, we have $a=2, b=2, L=n^{\log_b a} = n$, and $\sqrt{n} = n^{1/2} = O(L^{1-\epsilon})$, thus $T(n) = \Theta(n)$

-

Roughly Equal Levels: $$ f(n) = \Theta(L) \Rightarrow T(n) = \Theta (L \log n) \\ f(n) = \Theta(L \log n) \Rightarrow T(n) = \Theta (L \log^2 n) $$ e.g. $T(n) = 2T(n/2) + n$, we have $L=n^{\log_2 2} = n$, and $n = O(L)$, thus $T(n) = \Theta(n \log n)$

-

Geometrically Decreasing (Root) + Regularly Condition $$ f(n) = \Omega(L^{1+\epsilon}) \text{ and } af(n/b) \leq sf(n), s < 1 \Rightarrow T(n) = \Theta(f(n)) $$ e.g. $T(n) = 2T(n/2) + n^2$, we have $L=n^{\log_2 2} = n$, and $n^2 > O(L), 2f(n/2) = 2(n/2)^2 \leq (1/2) f(n)$, thus $T(n) = \Theta(n^2)$

Applications of D&C

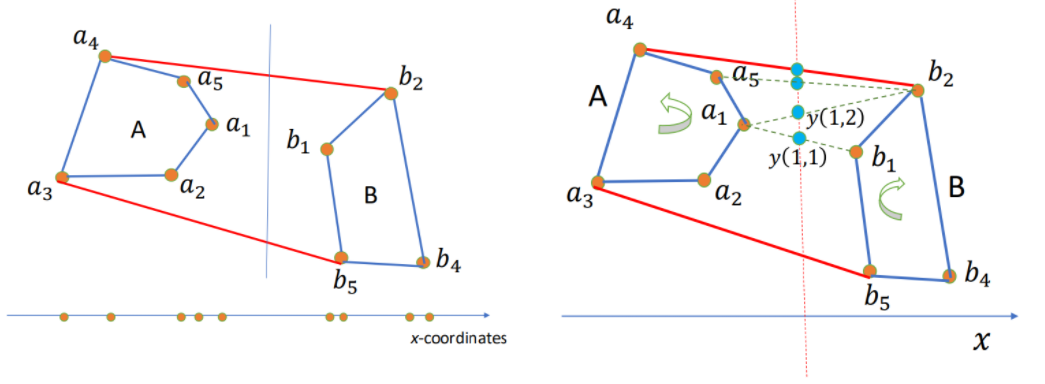

Convex Hull

Given $n$ points $S = { (x_i, y_i) | i = 1, 2, \cdots, n }$ s.t. all have different $x$ and $y$ coordinates, and no three of them in a line.

Convex Hull (CH): The smallest polygon containing all points in $S$. Represented by the sequence of points in the boundary in clockwise order.

How to use D&C here?

- Sort points by $x$-coordinate.

- For our input set $S$ of points:

- Divide (by $x$-coordinates) into left-half A and right-half B.

- Compute CH(A) and CH(B).

- Merge CH(A) and CH(B) to get CH(A+B).

It has the complexity time $T(n) = 2T(n/2) + \Theta(n) \rightarrow \Theta(n \log n)$.

BUT, how to merge?

Algorithm: Start with $(a_1, b_1)$. While $y(i, j)$ increases, keep moving by one step either a_i counterclockwise or b_j clockwise. When cannot increase anymore, we found the upper tangent, say $a_u, b_u$. Similarly, the lower tangent $a_l, b_l$. Then the CH = points of A clockwise from $a_l$ to $a_u$ + points of B clockwise from $b_u$ + $b_l$. Hence total time $\Theta(n)$

Median Finding / Peak Finding

Not interested.

Fast Fourier Transform

Multiplication of polynomials as list of coefficients.

Consider: $$ \begin{align*} A(x) &= a_0 + a_1 x + \cdots + a_n x^n = \sum_{i=0}^{n}a_i x^i \\ B(x) &= b_0 + b_1 x + \cdots + b_n x^n = \sum_{i=0}^{n}b_i x^i \end{align*} $$ To calculate: $$ \begin{align*} C(x) &= A(x) B(x) = c_0 + c_1 x + \cdots + c_{2n}x^{2n} =\sum_{i=0}^{2n}c_i x^i \\ \text{where }c_k &= a_0 b_k + a_1 b_{k-1} + \cdots + a_k b_0 = \sum_{i=0}^k a_i b_{k-i} \end{align*} $$

Complexity: For $c_k \rightarrow O(k)$, for $C(x) \rightarrow O(n^2)$

Can we do it faster? Like $O(n \log n)$?

Multiplication of polynomials as list of values.

Consider: A degree-$n$ polynomial is uniquely characterized by its values at any $n+1$ distinct points $x_0, x_1, \cdots, x_d$.

That is, if we fix any distinct $n+1$ points, we can specify any degree-$n$ polynomial $A(x) = a_0 + a_1 x + \cdots + a_d x^n$ by the $n+1$ values: $$ A(x_0), A(x_1), \cdots, A(x_n) $$ Then we can have: $$ \begin{align*} C(x) &= A(x) B(x) \\ C(x_i) &= A(x_i) B(x_i) \\ \text{where } i&=0,1,\cdots,2n \end{align*} $$ Polynomial multiplication is $O(n)$ using this representation!

But how can we get the coefficients of $C(x)$ ?

Evaluation and Interpolation

Evaluation: $a_0, a_1, \cdots, a_n \rightarrow A(x_0), A(x_1), \cdots, A(x_n)$

$$ A(x) = M_n(x) \cdot a \\ $$ $$ \begin{bmatrix} A(x_0) \\ A(x_1) \\ \vdots \\ A(x_{n}) \end{bmatrix}= \begin{bmatrix} 1 & x_0 & x_0^2 & \cdots & x_0^n \\ 1 & x_1 & x_1^2 & \cdots & x_1^n \\ \vdots & \vdots & \vdots & \ddots & \vdots \\ 1 & x_n & x_n^2 & \cdots & x_n^n \end{bmatrix} \cdot \begin{bmatrix} a_0 \\ a_1 \\ \vdots \\ a_{n} \end{bmatrix} $$

Interpolation: $A(x_0), A(x_1), \cdots, A(x_n) \rightarrow a_0, a_1, \cdots, a_n$ $$ a = M_n^{-1}(x) \cdot A(x) \\ $$ $$ \begin{bmatrix} a_0 \\ a_1 \\ \vdots \\ a_n \end{bmatrix}= \begin{bmatrix} 1 & x_0 & x_0^2 & \cdots & x_0^n \\ 1 & x_1 & x_1^2 & \cdots & x_1^n \\ \vdots & \vdots & \vdots & \ddots & \vdots \\ 1 & x_n & x_n^2 & \cdots & x_n^n \end{bmatrix} ^{-1} \cdot \begin{bmatrix} A(x_0) \\ A(x_1) \\ \vdots \\ A(x_n) \end{bmatrix} $$

Here $M_n$ is Vandermonde matrix.

Consider $C(x_i) = A(x_i) B(x_i)$, from the two matrix multiplication we can know that: If we have every $A(x_i)$ and $B(x_i)$ we can use $O(n)$ to multiply and get $C(x_i)$, then transfer it into every coefficient $c_i$.

But, using matrix multiplication directly will need time complexity of $O(n^2)$. Can we optimize it to $O(n \log n)$? Yes, and here comes Fast Fourier Tranform (FFT).

FFT

Let's calculate evaluation using FFT first.

Consider a polynomial $P(x)$ with length of even number $n$ s.t. $$ \begin{align*} P(x) &= a_0 + a_1 x + a_2 x^2 + \cdots + a_{n-1} x^{n-1} \\ &= P_{\text{even}}(x^2) + x \cdot P_{\text{odd}}(x^2) \\ \text{where } P_{\text{even}}(x) &= a_0 + a_2 x + a_4 x^2 + \cdots + a_{n-2} x^{n/2} \\ P_{\text{odd}}(x) &= a_1 + a_3 x + a_5 x^2 + \cdots + a_{n-1} x^{n/2} \end{align*} $$

By doing this, we divide the the length $n$ to two length $n/2$. Then, we have to conquer them each and then combine them together.

Let $A_k$ denote $A(x_k)$.

The question is: How can we divide $A_k$?

And here's the most essencial part: We use primitive root $\omega$.

Now, think about an complex equation: $$ z^n = (re^{i\theta})^n = 1 $$ We can get the solutions: $$ \begin{align*} r &= 1 \\ \theta &= \frac{2k\pi}{n} \\ \text{where } k &= 0, 1, \cdots, n-1 \end{align*} $$ Thus, $$ \begin{align*} \omega_n &= e^{\frac{2\pi}{n}i} \\ \omega_n^k &= e^{\frac{2\pi}{n}ki} = e^{i\theta} \\ (\omega_n^k)^n &= 1 \end{align*} $$ To each $k$, we want to calculate: $$ \begin{align*} A_k &= P(\omega_n^k) \\ &= P_{\text{even}}(\omega_n^{2k}) + \omega_n^k \cdot P_{\text{odd}}(\omega_n^{2k}) \\ &= P_{\text{even}}(\omega_{n/2}^{k}) + \omega_n^k \cdot P_{\text{odd}}(\omega_{n/2}^{k}) \end{align*} $$ Since $$ \omega_n^{2k} = e^{\frac{2\pi}{n} 2ki} = e^{\frac{2\pi}{n/2} ki} = \omega_{n/2}^k $$ This is called the Discrete Fourier Tranform (DFT) of $A_k$.

And we found that: $$ \begin{align*} A_{k+n/2} &= P(\omega_n^{k+n/2}) \\ &= P_{\text{even}}(\omega_{n/2}^{k+n/2}) + \omega_n^{k+n/2} \cdot P_{\text{odd}}(\omega_{n/2}^{k+n/2}) \\ &= P_{\text{even}}(\omega_{n/2}^{k} \cdot \omega_{n/2}^{n/2}) + \omega_n^k \cdot \omega_n^{n/2} \cdot P_{\text{odd}}(\omega_{n/2}^{k} \cdot \omega_{n/2}^{n/2}) \\ &= P_{\text{even}}(\omega_{n/2}^{k}) - \omega_n^k \cdot P_{\text{odd}}(\omega_{n/2}^{k}) \end{align*} $$ Since $$ \omega_n^n = e^{\frac{2\pi}{n}ni} = e^{2\pi i} = 1 \\ \omega_n^{n/2} = e^{\frac{2\pi}{n} (n/2)i} = e^{\pi i} = -1 $$

Combining $A_k$ and $A_{k+n/2}$ together for $0 \leq k < n/2$, we can do D&C here! Since $P_{\text{even}}(\omega_{n/2}^{k})$ and $P_{\text{odd}}(\omega_{n/2}^{k})$ can calculate from the half DFT.

The time complexity is $T(n) = 2T(n/2) + O(n) = O(n \log n)$. We get the whole evaluation.

IFFT

To do interpolation, we need Inverse Fast Fourier Tranfrom (IFFT).

Inverse FFT is easy to explain using Linear Algebra.

It's easy since: $$ a = M_n^{-1}(\omega) \cdot A(\omega) = \frac{1}{n} M_n(\omega^{-1}) \cdot A(\omega) $$ And it's over.

Let $A(\omega) = \text{FFT}(a, \omega)$, then $a = \frac{1}{n} \text{FFT}(A, \omega^{-1})$.

The Whole Progress

- Filled $A(x)$ and $B(x)$ to length of $n=2^k$ by $0$ due to easier FFT D&C.

- Calculate $A(\omega^k)$ and $B(\omega^k)$ for each $k=0,1,\cdots,n-1$, where $\omega = e^{\frac{2\pi}{n}i}$ using FFT.

- Calculate $C(\omega^k) = A(\omega^k) \cdot B(\omega^k)$.

- Calculate $c_i$ using IFFT.

L3 Randomized Algorithms

Basic Concepts of Randomized Algoirthms

Randomized Algorithms: On the same input, different executions of the same algorithm may result in different running times and different outputs.

Type of polynomial randomized algorithms:

- Monte Carlo: Bounded number of steps, but might not find the answer.

- Runs in polynomial time, $P_r$(answer is correct)$=p_o > \frac{1}{2}$.

- Always able to check the answer for correctness.

- How to use: Repeat if neccessary, use majority of answers.

- Las Vegas: No guarantee on max number of steps, always finds answer.

- Runs in expected polynomial time.

- Not stop until finds the correct answer.

$\overline{T}(n)$: Expected time to terminate for a given input of size $n$.

Quicksort

Consider combining D&C and randomize

- Pick an pivot $x$ of the array.

- Divide: Partition the array into subarrays using $x$.

- Conquer: Recursively sort $A$, $B$.

- Combine: $A$, $x$, $B$.

$\overline{T}(n) = \Theta(n \log n)$ for all inputs. $\Rightarrow$ Random selection of $x$ makes no input to be worse.

Or we can say $\Theta(n \log n)$ is the average complexity of quicksort.

Frievald's Algorithm

Given $n \times n$ matrices $A$, $B$ and $C$, check that $AB = C$ (yes or no).

It's very slow to use brute force algorithm since matrices multiplication is $O(n^3)$.

Consider randomization (Frievald's Algorithm):

- Choose a random binary vector $r$.

- If $(A(Br) = Cr) \bmod 2$ then output "yes" else "no".

Property: If $AB \not= C$, then $\text{Pr}[A(Br) \not= Cr] \geq \frac{1}{2}$.

That is, Do 10 tests, if all say "yes", the probability of error $< \left( \frac{1}{2} \right)^{10}$.

L4 Lower Bounds and Heaps

Comparison Model

Comparsion Sort

All the sorting algorithms we've seen so far are comparison sort (merge sort, insertion sort, bubble sort, etc.).

And the best running time that we've seen for comparison sort is $O(n \log n)$. Is it the best we can do?

Decision-Tree

A given recipe for sorting $n$ numbers $a_1, a_2, \cdots, a_n$.

Nodes are suggested comparisons: $i, j$ means compare $a_i$ to $a_j$ for $i, j \in \{1, 2, \cdots, n\}$

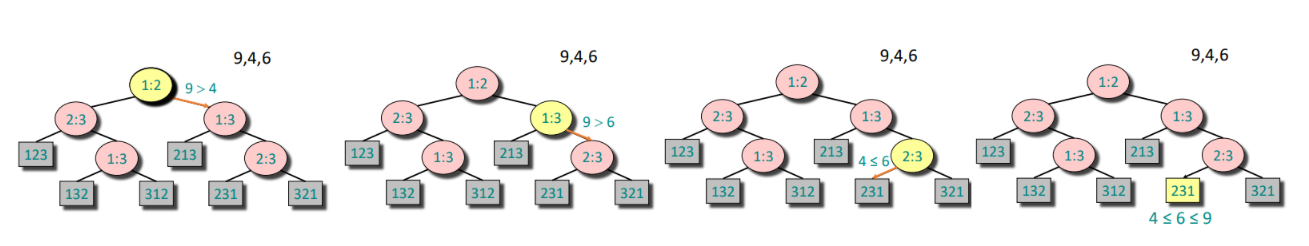

The figure below is an example of decision-tree of sorting $a_1 = 9, a_2 = 4, a_3 = 6$.

From the tree we can see that:

- A path from the root to the leaves of the tree represents a trace of comparisons that the algorithm may perform.

- The running time of the algorithm = the length of the path taken.

- Worst-case running time = height(depth) of tree

The tree must contain $\geq n!$ leaves since there are $n!$ possible permutations. And a height-$h$ binary tree has $L_h \leq 2^h$ leaves.

Hence: $$ n! \leq L_h \leq 2^h \iff h > \log(n!) \iff h = \Omega(n \log n) $$

Since the Stirling's approximation: $$ n! \approx \sqrt{2 \pi n} \left(\frac{n}{e}\right)^n \\ \log(n!) = n \log n - n + \frac{1}{2} \log (2 \pi n) $$

Theorem: Any decision tree that can sort $n$ elements must have height $\Omega(n \log n)$.

Linear Time Sorting

As metioned above, the comparison sorting have a lower-bound complexity of $\Omega(n \log n)$, so do linear time sorting exist?

Counting Sort

Given: An array $a_0, a_1, \cdots, a_{n-1}$ of $n$ keys to be sorted.

Count the times of number appeared between $a_{min}$ and $a_{max}$, then use the prefix sum array to get the final place of each element. With time complexity of $O(n+k), k = a_{max} - a_{min} + 1$

void counting_sort(vector<int>& a) {

int n = a.size();

int mn = *min_element(a.begin(), a.end());

int mx = *max_element(a.begin(), a.end());

int k = mx - mn + 1;

vector<int> count(k, 0);

for (int x : a) count[x - mn]++;

for (int i = 1; i < k; i++) count[i] += count[i - 1];

vector<int> res(n);

for (int i = n - 1; i >= 0; i--) {

int x = a[i];

res[--count[x - mn]] = x;

}

a = res;

}

Radix Sort

Use a radix(base) $k$ for an integer $x$: $$ x = a_{d-1} k^{d-1} + \cdots + a_0 k^0 \to a_{d-1}a_{d-2}\cdots a_0 $$

Idea: Sort on least-significant digit first with auxiliary stable sort.

We are given $n$ integers, each integer $< M$, in base $k$. Then we have time complexity of $O((n+k) \log_k M)d$

Heaps

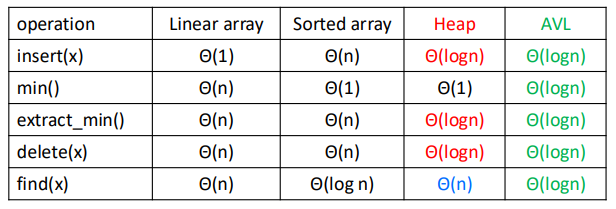

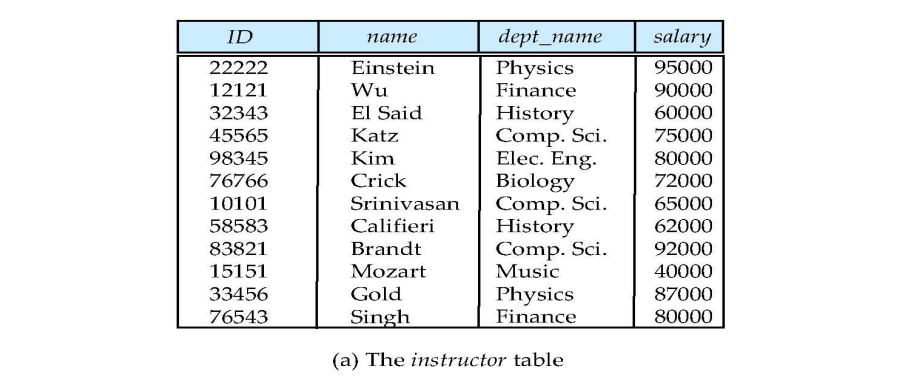

Storing Objects in Data Structures

We operate on objects of the form: object $x = (x.\text{key}, x.\text{data})$.

The attribute $x.\text{key}$ characterizes uniquely the object $x$. And we store $x.\text{key}$ combined with a pointer to the location of $x.\text{data}$ in memory.

Inserting and searching for object $x$ is done by inserting and searching for $x.\text{key}$. Or we can say we mostly focus on $x.\text{key}$ instead of $x.\text{data}$.

Priority Queue

$S$: set of elements $\{ x_1, x_2, \cdots, x_n \}$.

$x_i = (\text{key}_i, \text{data}_i)$.

Operations: $\text{Insert}(S,x), \max(S), \text{Extract-max}(S), \text{Incr-key}(S, x, k)$.

Heap

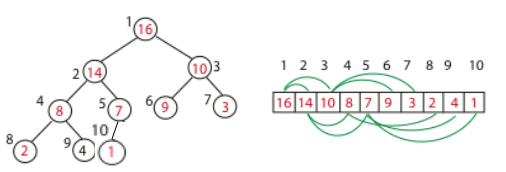

Heap: An array visualized as a nearly complete binary tree.

Navigate on the tree but move on array = full info of the tree, without pointers.

Max(Min) Heap Property: the key of a node is $\geq (\leq)$ than the keys of its children.

Binary Tree Structure in array, as shown above.

int s[MAXN], sz;

int parent(int i) {

return i >> 1; // i/2

}

int left(int i) {

return i << 1; // i*2

}

int right(int i) {

return i << 1 | 1; // i*2+1

}

And the height of the tree is $h = \log n$.

Max Heapify: Correct a single violation of the heap property occurring at the root $i$ of a subtree in $O(\log n)$.

void max_heapify(int i) {

int l = left(i), r = right(i), maxi = i;

if(l <= sz && s[l] > s[maxi]) maxi = l;

if(r <= sz && s[r] > s[maxi]) maxi = r;

if(maxi != i) {

swap(s[i], s[maxi]);

max_heapify(maxi);

}

}

Build Max Heap: Convert an array $A$ into a max heap. And we find that elements from $A_{\lfloor n/2 \rfloor+1}$ to $A_n$ are leaves of the tree, and they won't violate the rule. So we only need to heapify $A_1$ to $A_{\lfloor n/2 \rfloor}$. The time complexity is $O(n)$.

void build_max_heap(int n) {

sz = n;

for(int i = n/2; i >= 1; i--) max_heapify(i);

}

Insert: We first put it at the back of the array, then go up in the tree one level by one level until no violation.

void insert(int val) {

sz++;

s[sz] = val;

int i = sz;

while(i > 1 && s[parent(i)] < s[i]) {

swap(s[i], s[parent(i)]);

i = parent(i);

}

}

Extract Max: The max element must be the first one of the array, we can just swap it with the last element, then heapify.

int extract_max() {

if(!sz) return -1;

int maxn = s[1];

s[1] = s[sz];

sz--;

max_heapify(1);

return maxn;

}

Heap Sort: Like extracting max element for $n$ times. Time complexity $O(n \log n)$.

void heap_sort() {

int n = sz;

for(int i = n; i >= 2; i--) {

swap(s[1], s[i]);

sz--;

max_heapify(1);

}

}

L5 Trees

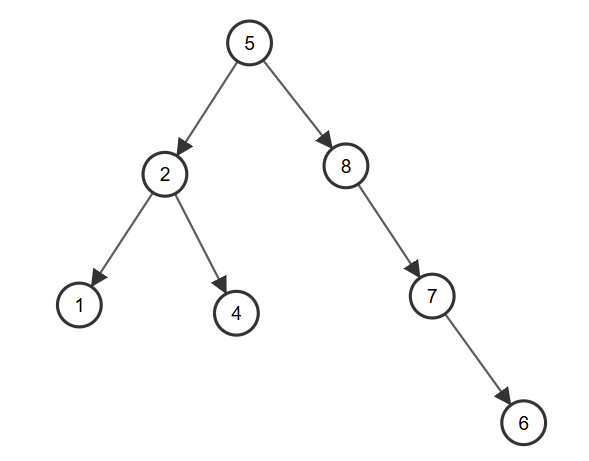

Binary Search Tree

A Binary Search Tree(BST) is a binary tree structure that every node $x$ of it must satisfies:

- All $\text{key}$ from its left subtree is less than $x.\text{key}$

- All $\text{key}$ from its right subtree is greater than $x.\text{key}$

And we can easily know that the left subtree and right subtree of $x$ are both BST.

Define Node: Using C++ struct and pointers.

struct node {

int key;

node* left;

node* right;

node(int k): key(k), left(NULL), right(NULL) {}

};

Insert: Start from the root, if the $\text{key}$ is less, put it to the left, else to the right. Doing the whole progress in recursion, until find an empty place and put it in. With average time complexity $O(\log n)$, but worst $O(n)$.

node* insert(node* root, int key) {

if(!root) return new node(key);

if(key < root->key) {

root->left = insert(root->left, key);

} else if(key > root->key) {

root->right = insert(root->right, key);

}

return root;

}

Build Tree: Actually there's no such a thing, you just need to insert the new node into root.

node* root = NULL;

root = insert(root, a);

Inorder Traversal: The inorder traversal of the BST is a sorted array, due to the property of it.

void printTree(node* root) {

if(!root) return;

printTree(root->left);

cout << root->key << " ";

printTree(root->right);

}

Search: Tipical binary search on tree.

node* search(node* root, int key) {

if(!root || root->key == key) {

return root;

}

if(key < root->key) {

return search(root->left, key);

} else {

return search(root->right, key);

}

}

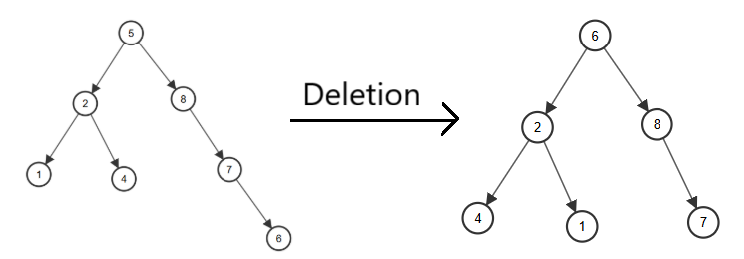

Delete: A bit complicated.

- Leaf (no children): Delete directly.

- On child: Use the child to replace it.

- Two children: Find the minimum of right subtree, replace it with the element we want to delete, and delete it.

node* deleteNode(node* root, int key) {

if(!root) return NULL;

if(key < root->key)

root->left = deleteNode(root->left, key);

else if(key > root->key)

root->right = deleteNode(root->right, key);

else {

if(!root->left && !root->right) {

delete root;

return NULL;

} else if(!root->left) {

node* temp = root->right;

delete root;

return temp;

} else if(!root->right) {

node* temp = root->left;

delete root;

return temp;

} else {

node* temp = findMin(root->right);

root->key = temp->key;

root->right = deleteNode(root->right, temp->key);

}

}

return root;

}

Warning: We usually use the different key to insert, but if there exists two same keys, we can do a count, or change the right subtree to greater or equal subtree.

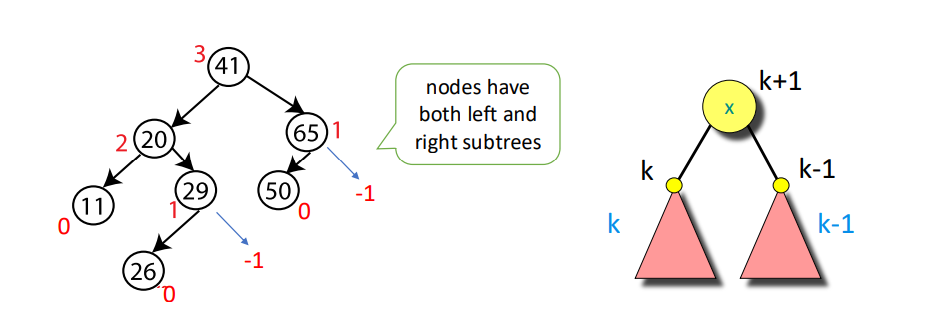

AVL Tree

An AVL (Adelson-Velskii and Landis) Tree is a self-balancing binary search tree.

Property: For every node, store its height:

- Leaves have height $0$

- NIL has height $-1$

Invariant: For every node $x$, the heights of its left child and right child differ by at most $1$.

And we can know that AVL trees always have height $\Theta(\log n)$.

Define Node: Like BST, but with height.

struct node {

int key, height;

node* left;

node* right;

node(int k): key(k), height(1), left(NULL), right(NULL) {}

};

Get Height: For convenience, I take leaves height as $1$, NIL height as $0$.

int getHeight(node* u) {

return u ? u->height : 0;

}

Get Balance: Use the definition of balance, $\text{BL} = \text{height}(\text{left subtree}) - \text{height}(\text{right subtree})$, notice that if $\text{BL} > 1$, left subtree is heavy, else if $\text{BL} < 1$, right subtree is heavy, else it's balanced at this node.

int getBalance(node* u) {

return u ? getHeight(u->left) - getHeight(u->right) : 0;

}

Right Rotate:

A B

/ \ / \

B T3 -> T1 A

/ \ / \

T1 T2 T2 T3

node* rightRotate(node* u) {

node* v = u->left;

node* w = v->right;

v->right = u;

u->left = w;

u->height = max(getHeight(u->left), getHeight(u->right)) + 1;

v->height = max(getHeight(v->left), getHeight(v->right)) + 1;

return v;

}

Left Rotate:

A B

/ \ / \

T1 B -> A T3

/ \ / \

T2 T3 T1 T2

node* leftRotate(node* u) {

node* v = u->right;

node* w = v->left;

v->left = u;

u->right = w;

u->height = max(getHeight(u->left), getHeight(u->right)) + 1;

v->height = max(getHeight(v->left), getHeight(v->right)) + 1;

return v;

}

Insert: Consider insertion with 4 situations (L and R means insert into left and right subtree):

- LL: Do right rotation once.

- RR: Do left rotation once.

- LR: Do left rotation, then right rotation.

- RL: Do right rotation, then left rotation.

node *insert(node* u, int key) {

if(!u) return new node(key);

if(key < u->key) u->left = insert(u->left, key);

else if(key > u->key) u->right = insert(u->right, key);

else return u;

u->height = max(getHeight(u->left), getHeight(u->right)) + 1;

int BL = getBalance(u);

if(BL > 1 && key < u->left->key) return rightRotate(u); // LL

if(BL < -1 && key > u->right->key) return leftRotate(u); // RR

if(BL > 1 && key > u->left->key) { // LR

u->left = leftRotate(u->left);

return rightRotate(u);

}

if(BL < -1 && key < u->right->key) { // RL

u->right = rightRotate(u->right);

return leftRotate(u);

}

return u;

}

Delete: As same as deletion in BST, then check whether it's balanced.

node* findMin(node* u) {

while(u->left) u = u->left;

return u;

}

node* deleteNode(node* u, int key) {

// BST Deletion first

if(!u) return NULL;

if(key < u->key) u->left = deleteNode(u->left, key);

else if(key > u->key) u->right = deleteNode(u->right, key);

else {

if(!u->left || !u->right) {

node* temp = u->left ? u->left : u->right;

if(!temp) {

temp = u;

u = NULL;

} else {

*u = *temp;

}

delete temp;

} else {

node* temp = findMin(u->right);

u->key = temp->key;

u->right = deleteNode(u->right, temp->key);

}

}

if(!u) return NULL;

// Keep balance

u->height = max(getHeight(u->left), getHeight(u->right)) + 1;

int BL = getBalance(u);

if(BL > 1 && getBalance(u->left) >= 0)

return rightRotate(u); // LL

if(BL > 1 && getBalance(u->left) < 0) {

u->left = leftRotate(u->left);

return rightRotate(u); // LR

}

if(BL < -1 && getBalance(u->right) <= 0)

return leftRotate(u); // RR

if(BL < -1 && getBalance(u->right) > 0) {

u->right = rightRotate(u->right);

return leftRotate(u); // RL

}

return u;

}

Time Complexity: In balanced BST, all operations take $O(\log n)$.

Red-Black Tree

Shit.

Interval Tree

Interval Tree is a balanced BST but nodes are intervals.

Define Node: Interval has $l$ and $r$, while $\text{maxn}$ is the maximum $r$ of all interval of the whole subtree, and we use $l$ as the $\text{key}$ of BST.

struct interval {

int l, r;

};

struct node {

interval i;

int maxn;

node* left;

node* right;

node(interval in): i(in), maxn(in.r), left(NULL), right(NULL) {}

};

Insert: Like regular BST, using $l$ as the $\text{key}$, and record $\text{maxn}$.

node* insert(node* u, interval i) {

if(!u) return new node(i);

if(i.l < u->i.l) u->left = insert(u->left, i);

else u->right = insert(u->right, i);

u->maxn = max(u->maxn, i.r);

return u;

}

Search: To get all the intervals who are overlapping with $i$, there are 3 situations

- If the node has overlap with $i$, record the answer.

- If its left subtree's $\text{maxn}$ is greater than $i.l$, search in the left subtree.

- If the node's $l$ is less than $i.r$, search in the right subtree.

bool isOverlap(interval a, interval b) {

return a.l <= b.r && a.r >= b.l;

}

void searchAll(node* u, interval i, vector<interval>& re) {

if(!u) return;

if(isOverlap(u->i, i)) re.push_back(u->i);

if(u->left && u->left->maxn >= i.l) searchAll(u->left, i, re);

if(u->right && u->i.l <= i.r) searchAll(u->right, i, re);

}

The regular Interval Tree is like BST with worst time complexity $O(n)$ for each operation, but it can be optimized by AVL tree or other balanced tree, reducing the time complexity to $O(\log n)$.

vEB Tree

An vEB (van Emde Boas) Tree is a data structure that can support below functions in a integer set $S \subseteq \{ 0, 1, \cdots, U-1 \}$, while $U$ is the range of integers (e.g. $U=2^{16}$)

L6 Hashing

Basic Concepts of Hashing

Hash Function: A function that maps data of arbitrary size to fixed-sized values. And the goal is to propose a data structure such that for key $k$:

- $\text{add}(k), \text{delete}(k) = O(1)$

- $\text{search}(k) = O(1)$ on the average

From what I've learnt, the most important thing is not the precise hashing function itself, but the critical thought of hashing.

Hash Table: A table that stores indice and values of hashing.

Making Choices

Choosing the Keys:

- By Nature: Randomly from $U$. (Simple uniform hashing)

- By an Opponent: That knows the hash function we use when this is fixed, in a way to create the longest possbile chain. (Universal hashing)

Choosing the Hash Funcions:

- We use a fixed hash function everyone knows.

- We change before each experiments the hash function, chosen randomly from a "universal set" of functions known to everyone.

Special Case (perfect hashing): We know the keys, then choose the hash function.

Performance: Average length of chain in a typical slot (same hashing value).

Models for Randomness

Simple Uniform Hashing Assumption (SUHA)

$h$ is fixed: In every experiment each key $k$'s value is chosen randomly (uniformly) from $U$.

- Then, $h$ is "good" if $h(k)$ scatters uniformly over the hash table: $$ P_k[h(k) = i] = \frac{1}{m}, \forall i = 1, 2, \cdots, m $$

- We call this the Simple Uniform Hashing Assumption.

Universal Hashing

The set of keys to be stored is assumed fixed: In every experiment, $h$ is randomly (uniformly) chosen from a set $H$.

- Then, $H$ is "good" if $$ P_h[h(k_1) = h(k_2)] \leq \frac{1}{m}, \forall k_1 \not= k_2 $$

- $H$ is a set of Universal Hash Funcions

Actually, Universal Hashing is not a hash function, but designing a set of hash functions. It will randomly choose a hash function when using. Or we can san Universal Hashing is using SUHA by randomization, in order to decrese the probability of collisions.

Universal Hashing Function Examples

The Matrix Method

Settings:

- Keys are $u$-bits long, $|U| = 2^u$

- Hash values are $b$-bits long, hash table size $m = 2^b$

And we pick $h = $ random $b \times u$ $0/1$ matrix, define: $$ h(x) = h \cdot x \pmod 2 $$

Which means matrix multiplication under module $2$.

Claim: For $x \not= y, P_h[h(x) = h(y)] \leq \frac{1}{m} = \frac{1}{2^b}$

e.g. $$ x = 2 = \begin{bmatrix} 1 \\ 0 \\ 1 \\ 0 \end{bmatrix}, h = \begin{bmatrix} 1 & 0 & 0 & 0 \\ 0 & 1 & 1 & 1 \\ 1 & 1 & 1 & 0 \end{bmatrix} $$ And we get $$ h(x) = h \cdot x = \begin{bmatrix} 1 \\ 1 \\ 0 \end{bmatrix} = 6 $$

Linear Hashing for Vector Keys

Settings:

- Suppose keys are vectors $x = (x_1, x_2, x_3, x_4)$, where $x_i$ is an $8$-bit integer. (i.e. $0 \leq x \leq 255$)

- We use a hash table of size $m = p$, where $p$ is a prime number slightly greater than $255$ (e.g. $257$).

Choose the vector of coefficients $a = (a_1, a_2, a_3, a_4)$ where $a_i$ is (uniformly) randomly selected in the range $\{0, 1, \cdots, m-1\}$ And we define: $$ h_a(x) = \left( \sum_{i=1}^4 a_i x_i \right) \bmod m $$

So the hash family is $$ H = \{ h_a : a \in [0, m-1]^4 \} $$

Claim: For $x \not= y, P_a[h_a(x) = h_a(y)] = \frac{1}{m}$

Affine Hashing for Integer Keys

Also called the Carter-Wegman construction

Settings:

- Keys are integers $k \in U$

- Pick a prime number $p$ s.t. $|U| \leq p \leq 2|U|$

- Table size $m \leq p$

We radomly choose $a \in \{ 1, 2, \cdots, p-1 \}, b \in \{ 0, 1, \cdots, p-1 \}$, and define: $$ h_{a,b}(k) = ((a \cdot k + b) \bmod p) \bmod m $$

Claim: For $x \not= y, P_{a,b}[h_{a,b}(x) = h_{a,b}(y)] \leq \frac{1}{m}$

e.g. $|U| = 2^4 = 16, p = 17, m = 6, k = 8, a = 3, b = 4$, then: $$ h_{3,4}(8) = ((3 \cdot 8 + 4) \bmod 17) \bmod 6 = 5 $$

Rolling Hash

Sliding window, but in hashing.

Rabin-Karp Algorithm

Consider a string $S = s_0 s_1 s_2 \cdots s_{n-1}$. We define a window of length $k$ s.t. $T = s_i s_{i+1} \cdots s_{i+k-1}$. And we use the polynomial hash function: $$ H_i = H(T) = (s_i \cdot p^{k-1} + s_{i+1} p^{k-2} + \cdots + s_{i+k-1} \cdot p^0) \bmod M $$ Where $p$ is the base, $M$ is a large prime number and $s_i$ is the ASCII number.

From the function above we can get: $$ H_{i+1} = ((H_i - s_i \cdot p^{k-1}) \cdot p + s_{i+k}) \bmod M $$

And then we can $O(1)$ check whether a string of length $k$ is appeared in $S$.

Table Doubling

We define Load Factor: $$ \alpha = \frac{n}{m} $$ Where $n$ is the element number in hash table, $m$ is the bucket number of hash table.

We have known that we need a hash table to store the keys and values, but if the element number in table is too large, it will cause more and more collisions, which slowing down the hashing progress.

So if $\alpha$ is too large (usually $1$), we will double the bucket number $m$, then rehash all the elements into new table.

Average Cost of Insertions $=\frac{2^{i+2}c + 2^i c_0}{2^i} = O(1)$

Or observe that up to any time $t$: by paying $O(1)$ per insert, covers at all times the total actual cost. We say that insert has an amortized cost of $O(1)$.

Perfect Hashing

Bloom Filters

L7 Amortized Analysis

Why Amortized Complexity?

Think about doubling hash tables, some of the functions are cheap, like $O(1)$ calculate hashing, but some are expensive, like $O(n)$ to doubling hash tables.

Amortized analysis is a way to make sure each function is in an average time, no need to assume randomized input.

Actual Cost and Amortized Cost

$c(t) = $ actual cost of the operation at time $t$. It can be a function of the state of the data structure, e.g. depends on $n$ = number of elements stored at time $t$.

$a(t) = $ amortized cost of the operation at time $t$. Assume $a(t) = O(1) \ \forall t$, i.e., $a(t) \leq c_a \ \forall t$

Suppose that we have shown that on any trajectory $0, 1, \cdots, T$, $$ \sum_{0 \leq t \leq T} c(t) \leq \sum_{0 \leq t \leq T} a(t) $$

Which means at any operation, the total actual cost $\leq$ the total budget cost we assigned. That is, our budget is enough to cover all the cost.

For any length $T$, $$ \sum_{0 \leq t \leq T} c(t) \leq \sum_{0 \leq t \leq T} a(t) \leq c_a T $$

Amortization Methods

Aggregate Method

The easiest way of amortization.

$$ \text{amortized cost per op} = \frac{\text{total cost of } k \text{ ops}}{k} $$

Amortized Bound

Define an amortized cost $a_op(t) = O(1)$ to each operation type s.t. for any possible sequence of operations, the total cost is covered. That is, $$ \sum(\text{amortized costs}) \geq \sum(\text{actual costs}) $$

Methods: Accounting method, Charging method, Potential method.

L8 Graphs

Graph Definition

$G = (V, E)$

$V$: A set of vertices. $|V|$ usually denoted by $n$.

$E \subseteq V \times V$: A set of edges (pair of vertices). $|E|$ usually denoted by $m$.

$m \leq n^2 = O(n^2)$

Two flavors:

- Directed graph: Edges have order.

- Undirected graph: Ignore order. Then only $\frac{n(n-1)}{2}$ possible edges.

Problems Relates to Graphs

Reachability: Find all states reachable from a given state.

Shortest Paths: Find the shortest path between state $s_0$ and $s_d$.

Cycle: Find if a directed graph has a cycle.

Topological Sort: Find a causal ordering of the nodes if it exists.

Minimum Spanning Tree: Find the minimum subgraph that contains all nodes.

Strongly Connected Components: Maximal sets of nodes that from every node can reach any other node in the set (mixing).

Graph Representation

Four representations with pros/cons:

- Adjacency Lists (of neighbors for each vertex)

- Incidence Lists (of edges from each vertex)

- Adjacency Matrix (0-1, which pairs are adjacent)

- Implicit representation (as a find neighbor function)

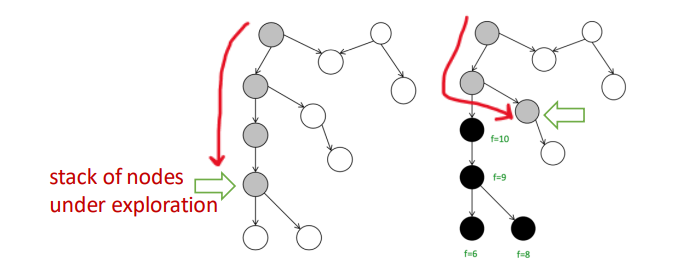

DFS

Depth-First Search (DFS) is a versatile linear-time procedure that reveals a wealth of information about a graph.

int n, m;

vector <int> g[MAXN];

int vis[MAXN]; // 0: WHITE, 1: GREY, 2: BLACK

int d[MAXN], f[MAXN], cnt;

void dfs(int u) {

cnt++;

d[u] = cnt;

vis[u] = 1;

for(int x = 0; x < g[u].size(); x++) {

int v = g[u][x];

if(!vis[v]) dfs(v);

if(vis[v] == 1) cout << "Cycle!" << endl;

}

cnt++;

f[u] = cnt;

vis[u] = 2;

}

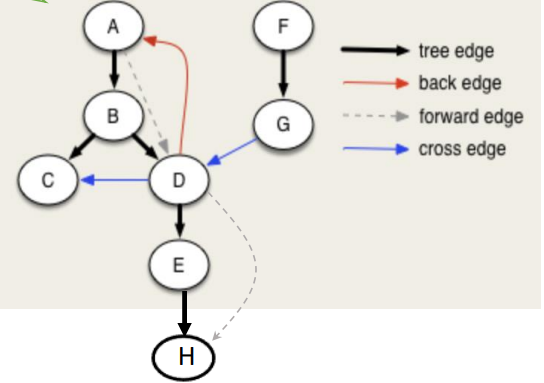

Properties of DFS

When DFS a graph, we will record two time stamp of each vertex:

- $d[u]$: Discover Time, when $u$ is first visited.

- $f[u]$: Finish Time, when we finish exploring all neighbors of $u$.

Of course, it's easy to see that $u.d < u.f$

Then we can get $4$ different type of edges, for a $u \to v$:

- Tree Edge: When we first discover a new vertex $v$ from $u$.

- $u.d < v.d < v.f < u.f$

- Back Edge: An edge that points back to an ancestor in the DFS tree.

- $v.d < u.d < u.f < v.f$

- Forward Edge: An edge that goes from a node to its descendant in the DFS tree, but not a direct tree edge.

- $u.d < v.d < v.f < u.f$

- Cross Edge: An edge that goes between two different DFS branches (neither ancestor nor descendant).

- $v.d < v.f < u.d < u.f$

White Path Theorem

We colored the vertex in DFS:

- White: Not yet visited

- Gray: Currently being explored

- Black: Finished exploring

If, during a DFS, there is a path from a vertex $u$ to a vertex $v$ that only goes through white vertices, then $v$ will become a descendant of $u$ in the DFS tree.

We can use it to detect cycle in graph: When we see a back edge, or meet grey node, means we found a path from a node $u$ back to one of its ancestors $v$, and there is a cycle.

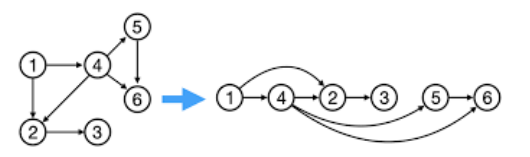

Topological Sort

Topological Sort of a DAG $G=(V,E)$.

Theorem: $G$ is a DAG iff there is a linear ordering of all its $v \in V$ s.t., if $(u, v) \in E$, then $u < v$ in the above ordering. Or we say $(u,v)$ goes always from left to right.

Algorithm: Given DAG $G$.

- Run any DFS($G$), compute finishing time of nodes.

- Linear order: The nodes in decreasing order of finishing times.

Corollary: In a DAG, $(u, v) \in E \iff u.f > v.f$ for any DFS.

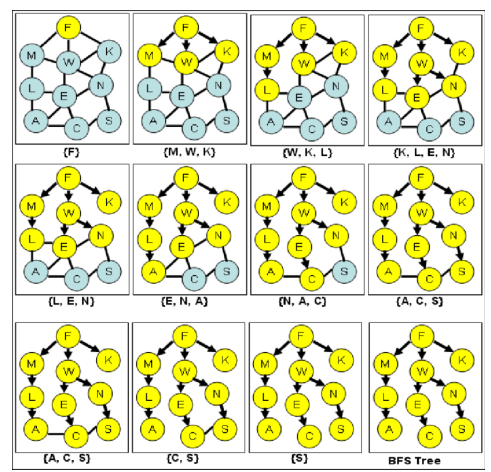

BFS

Breath-First Search (BFS) is searching neighbors around each node. And we usually use queue to implement.

Used very often in Shortest Path Algorithms.

int n, m;

vector <int> g[MAXN];

bool vis[MAXN];

void bfs(int st) {

queue <int> q;

q.push(st);

vis[st] = true;

while(!q.empty()) {

int u = q.front(); q.pop();

for(int x = 0; x < g[u].size(); x++) {

int v = g[u][x];

if(!vis[v]) {

vis[v] = true;

q.push(v);

}

}

}

}

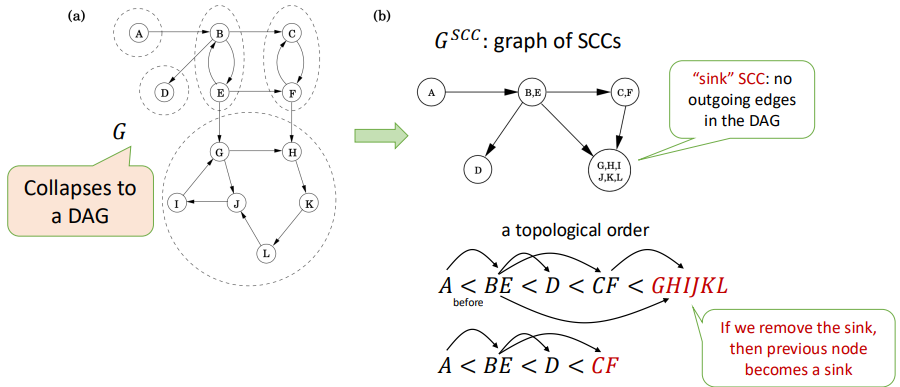

SCC

Strongly Connected Component (SCC): Maximal set of vertices $C \subseteq V$ such that for every pair of vertices $u, v \in C$, we have both $u \leadsto v$ and $v \leadsto u$.

$u \leadsto v$ means there is a path from $u$ to $v$.

Kosaraju's Algorithm

Goal: Find all SCCs.

Key Observation: If you collapse each SCC into a single node, the resulting graph is a DAG. This DAG has a topological order.

If you start a DFS on a node located in a sink SCC (an SCC with no outgoing edges in the SCC DAG), the DFS will visit all nodes within that SCC and then stop, without leaking into other SCCs. You could then remove this SCC and repeat the process.

Idea: Reverse the direction of all edges in $G$ to create the transpose graph $G^T$.

int n, m;

vector<int> g[MAXN]; // original graph

vector<int> gr[MAXN]; // reversed graph

int vis[MAXN];

int d[MAXN], f[MAXN], cnt;

vector<int> order; // store vertices by finish time

vector<int> component; // store one SCC

void dfs1(int u) {

vis[u] = 1;

cnt++;

d[u] = cnt;

for (int v : g[u]) {

if (!vis[v])

dfs1(v);

}

cnt++;

f[u] = cnt;